AI is a big topic these days. One reason for this is the fear of AIs acting against human values, potentially causing severe consequences. However, there’s an even bigger challenge than aligning AIs: Let’s look in the mirror.

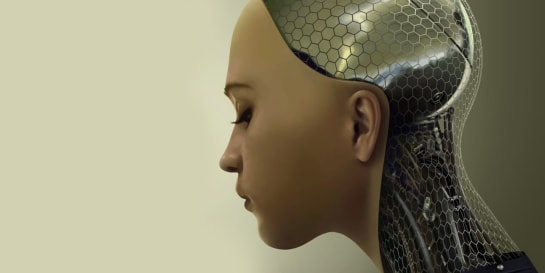

Today’s Large Language Models (LLMs) like GPT seem to be close to the level of human intelligence already. When I use OpenAI’s ChatGPT with GPT-3.5 or GPT-4, I can have serious conversations with it about a wide range of topics.

Well, it’s not really a conversation, since the AI never asks me a question back. It’s one-sided, to be honest. Additionally, there’s a point when its limits become apparent: It starts to “forget” earlier context of the chat (due to its limited context window, which is measured in tokens). When continuity and consistency break, I’m reminded that the intelligence is artificial.

Overall, it feels like talking to a highly intelligent, yet rather demented being.

It takes some time, though, to uncover the inconsistency and the failing continuity. I’ve seen other humans feed short, intelligent GPT-generated output into other AIs that turn text into believable audio or video. The combination of intelligent and believable in this context means that it takes me, a 44-year-old trained technology and strategy expert, intentional mental effort to identify it as what it is: artificial. If I’m running on autopilot and don’t pay attention, I’ll buy it as real.

So, even with all current limitations of single AIs, there’s reason for serious concern about the power that comes with Artificial Intelligences. (Yes, plural.)

A Matter of Trust

These concerns come from a simple fact: It’s the first time in the history of humankind that even experts don’t know how a globally deployed technology really works. Usually, people who don’t know simply resort to trusting people who do know. Yet in the case of today’s Large Language Models, we don’t know and can’t even fall back on trusting anyone else, because literally nobody really knows.

That’s where AI Alignment comes in. If we can’t know the inner workings and can’t trust another human who does, is there a way to implement at least some safeguards?

Sure. The theoretical solution is to align the thing that we don’t understand with our values. In more practical terms, this means that both humans and machines put the same value on the same outcomes. For example, if humans put high value on their survival, machines will put equally high value on the humans’ survival. Shared core values will generate compatible intentions, and resulting outcomes will complement each other. In such a scenario, there’s simply no need to fully understand the inner workings of that thing we don’t understand.

There are a few challenges on the way to rolling out this solution in practice, however:

First, how can we reliably implement shared core values in a machine that nobody really understands? That’s a tricky one. Have we even defined to the letter what those core values would be?

Second, how do we overcome the overwhelming odds against full-time alignment? Consider this:

A single AI that misaligns only once can trigger serious consequences. Humans, on the other hand, require full-time alignment to avoid any such situation.

Hm, that’s an impossible one. What’s more probable: a single misalignment once—or eternal full alignment? You do the math.
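“The math” can be sketched in a few lines. This is a minimal illustration under loudly stated assumptions that are not part of the article: every interaction carries the same small, independent probability of misalignment, and the numbers used (a one-in-a-million chance per interaction) are purely hypothetical. Under those assumptions, the chance of staying aligned every single time shrinks exponentially with the number of interactions.

```python
def prob_always_aligned(p_misalign: float, n_events: int) -> float:
    """Probability that none of n independent events is a misalignment.

    Assumption (illustrative only): each event misaligns independently
    with the same probability p_misalign.
    """
    return (1.0 - p_misalign) ** n_events


def prob_at_least_one_misalignment(p_misalign: float, n_events: int) -> float:
    """Complement of perfect alignment: at least one misalignment occurs."""
    return 1.0 - prob_always_aligned(p_misalign, n_events)


if __name__ == "__main__":
    # Hypothetical per-event risk of one in a million.
    p = 1e-6
    for n in (1_000, 1_000_000, 1_000_000_000):
        risk = prob_at_least_one_misalignment(p, n)
        print(f"{n:>13,} events -> P(at least one misalignment) = {risk:.4f}")
```

Even with a tiny per-event risk, the probability of at least one misalignment approaches certainty as the number of events grows, which is the asymmetry the question above points at.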

That’s why it’s called the AI Alignment Problem. But that’s not even the biggest issue.

The True Challenge: Unaligned Humans

Let’s take a step back here and look at this from a historical point of view. Using the Cosmic Calendar as a scale (it maps the time from the Big Bang until today onto a single calendar year), Columbus discovered America just 1.2 seconds before midnight on December 31. On this scale, AI has been in development for the last 0.1 seconds of the Cosmic Calendar. In contrast, modern humans evolved a whopping eight minutes before midnight.

In other words, trying to align natural intelligences (i.e., humans) is something we’ve been practicing around four thousand times longer than we’ve been trying to align artificial and natural ones. And, after all this time, I can state with a high degree of confidence: Natural intelligences are not yet aligned at all. Especially today, we can observe polarization (a synonym for misalignment) of human societies on every level. Throughout humankind as we live and breathe today, we still can’t agree on a single thing of global relevance.
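The Cosmic Calendar figures can be checked with some quick arithmetic. The sketch below assumes an age of the universe of roughly 13.8 billion years (a figure not stated in the article) and a 365-day calendar year; the constant and function names are made up for illustration.

```python
# Assumption (not from the article): the universe is about 13.8 billion years old.
UNIVERSE_AGE_YEARS = 13.8e9
SECONDS_PER_CALENDAR_YEAR = 365 * 24 * 60 * 60

# How many real years one second on the Cosmic Calendar stands for.
YEARS_PER_COSMIC_SECOND = UNIVERSE_AGE_YEARS / SECONDS_PER_CALENDAR_YEAR


def cosmic_seconds_to_years(cosmic_seconds: float) -> float:
    """Convert a span on the Cosmic Calendar into real years."""
    return cosmic_seconds * YEARS_PER_COSMIC_SECOND


# Spans quoted in the article, in Cosmic Calendar seconds.
columbus_years = cosmic_seconds_to_years(1.2)
ai_years = cosmic_seconds_to_years(0.1)
human_years = cosmic_seconds_to_years(8 * 60)

# How much longer humans have been around than AI development, per these figures.
ratio = (8 * 60) / 0.1

if __name__ == "__main__":
    print(f"Years per cosmic second: {YEARS_PER_COSMIC_SECOND:.0f}")
    print(f"Columbus: about {columbus_years:.0f} years ago")
    print(f"AI development: about {ai_years:.0f} years")
    print(f"Modern humans: about {human_years:,.0f} years")
    print(f"Ratio humans / AI: about {ratio:,.0f}")
```

One cosmic second works out to about 438 years under this assumption. With the rounded figures of eight minutes and 0.1 seconds, the ratio is about 4,800, which is the same order of magnitude as the “around four thousand” above; a span of roughly 0.12 cosmic seconds for AI development would give 4,000 exactly.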

If we haven’t managed to align natural intelligences so far, how are we supposed to align natural and artificial intelligences?

To make matters worse: So far, we’ve failed at aligning intelligences of our own kind. Intelligences that—while we still don’t fully comprehend them—are the most familiar to us.

Can the Unaligned Earthlings Align the Unaligned Alien?

Being aligned as humans is the absolute prerequisite for solving the AI Alignment Problem. The big tech companies at the forefront of developing AI would have to be the first to be aligned. (Remember: We’re talking about AIs, plural.)

Today, that’s an impossibility due to the incentives not being in sync: Nobody gets rewarded for getting aligned. On the contrary, developing a competitive advantage and precisely not falling in line is what brings the biggest rewards. We cherish diversity and individuality in some way, shape, or form.

Being the inveterate optimist that I am, I believe that humankind will solve this problem. I also believe that we’ll solve it in a way we can’t imagine just yet. (It’ll somehow involve synchronization of incentives, I can say that much.) I see great value in Large Language Models and hope that we’ll be able to harness them at acceptable risk.

This article serves as a call to focus our work primarily on aligning ourselves in matters of utmost importance. It’s the necessary step we need to take before all else.

Conclusion

AIs are being developed at breakneck speed and already achieve impressive levels of intelligence today. The problem is: Nobody really knows how they work, and humankind has no experience dealing with globally deployed technologies that nobody really understands.

This article takes a stab at the proposed solution of AI Alignment. It shows the impracticability or even impossibility of the solution, at least as long as the human side of things is as misaligned as it currently is. Let’s try to fix that first by taking a long, hard look at how our incentives are currently structured.

Michael Schmidle

Founder of PrioMind. Start-up consultant, hobby music producer and blogger. Opinionated about technology, strategy, and leadership. In love with Mexico. This blog reflects my personal views.
